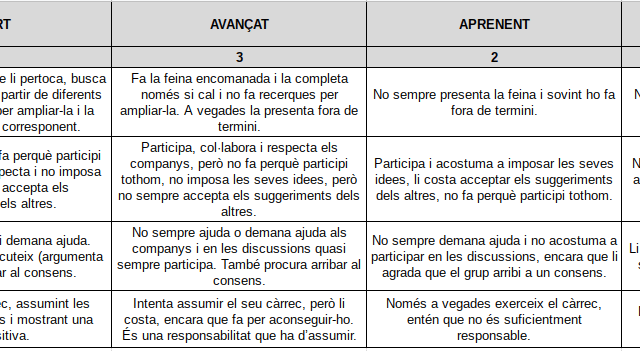

Analytical rubrics have been in vogue for a few years now. Talk to teachers at any level and many of them use analytical rubrics. And it seems that, just by using these rubrics, they are already doing formative assessment.

My experience, however, tells me quite the opposite. I am not as radical as a good friend of mine who says that rubrics are obsolete, but she has a point.

Too many teachers have seen the rubric as a tool to improve grading. An exam is easy to grade: points and half points are assigned to the questions, and those for the questions the student has answered well are added up. But with more competency-based activities (an oral presentation, a model, a video, etc.) it is more difficult. And many teachers have seen the light with the analytical rubric. We assign several criteria with weights, add scores to each level and … done! It seems that the grading is justified.

I will not be the one to say that this is wrong and should not be done. But it has nothing to do with formative assessment. Another friend has told me on occasion that this is where it starts. I won't disagree, and it is always better to give marks this way, with an analytical rubric, than with a bare number that is very hard to justify because it is exactly that: just a number.

But, as I recently reaffirmed on Twitter (with some voices against it), I believe that the rubric is not meant for grading. It can be used for that, and it can improve grading, but I think its function should be a completely different one. It should give us clear criteria for, above all, co-assessment and self-assessment, and therefore for detecting which aspects of a task or skill we have already achieved and which still have room for improvement. And, logically, for improving them and re-assessing them later on.

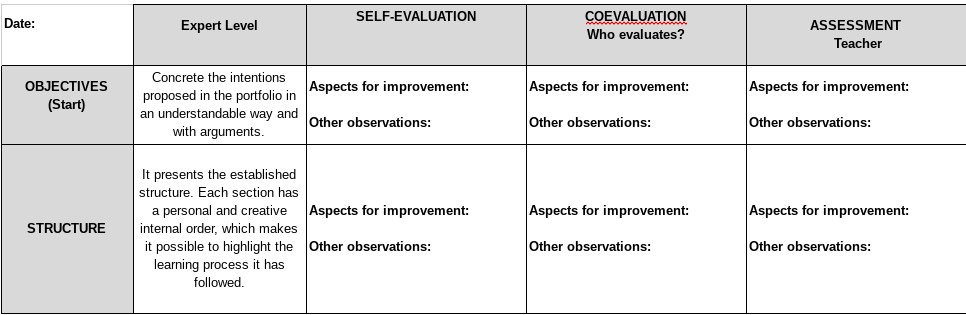

And why do I say that there is life beyond the analytical rubric? Because there are other instruments that, on many occasions, help us much more in this process. For example, single-point rubrics, which I talked about in this article, are a much better instrument. It is true that single-point rubrics are not used for grading, nor do we want them to be, and I like that. But they also prompt a reflection that too often does not occur with the mechanical use of analytical rubrics. The student cannot simply tick a description (too often he/she does not read the descriptions and just imagines a grading scale of Bad, Fair, Good, Very good), but has to write down what he/she has done well and what he/she has not, based on clear criteria.

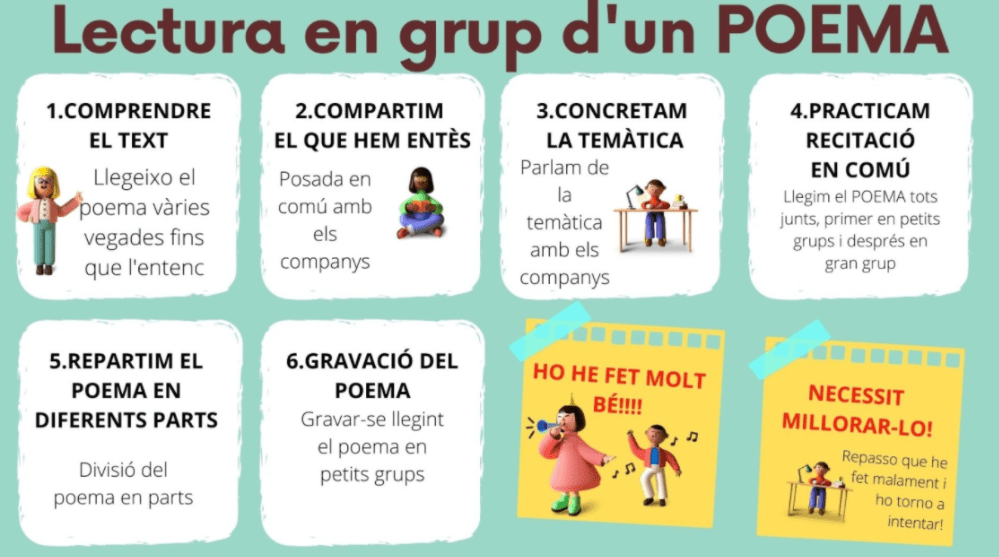

But there is more. Orientation bases, for example. Some will say that they are not an assessment tool, but guidelines for doing an activity. And that is what they are, but once they have been drawn up (if the students draw them up themselves, that process is already an important source of learning), they are very useful for the student to review how he or she is carrying out the task. And that is assessment.

And we continue. Checklists are another very good instrument. They check much more specific aspects, but they are equally useful. The student only has to indicate whether or not he/she meets each criterion.

Surely we could find others (rating scales, observation charts, learning diagrams, etc.). In any case, this article only set out to show that, apart from analytical rubrics, there are many other instruments that can be much more useful for accompanying students in their learning process: instruments that do not grade them and that give them clear criteria for self-assessment and co-assessment, in addition to our feedback, of course.